All Research Projects (most recent first)

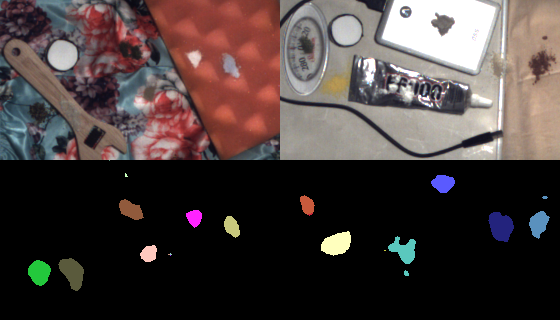

We present the first comprehensive dataset and approach for powder recognition using multi-spectral imaging. By using Shortwave Infrared (SWIR) multi-spectral imaging together with visible light (RGB) and Near Infrared (NIR) and incorporating band selection and image synthesis, we conduct fine-grained recognition of 100 powders on complex backgrounds and achieve reasonable accuracy.

|

In this work, we propose a two-stage near-light photometric stereo method using circularly placed point light sources (commonly seen in recent consumer imaging devices such as NESTcam and Amazon Cloudcam). Because the light source baseline is small, the change in image intensity across the input images is small. In addition, the distant-light assumption fails in the near-light condition, so the light directions and intensities are not evenly distributed across the scene. Our proposed method tackles both issues.
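
To make the near-light issue concrete, the following minimal sketch (not the method proposed in this project) evaluates the standard point-light model with inverse-square fall-off and Lambertian shading for a ring of lights. The function names, the ring radius, and the scene geometry are illustrative assumptions.

```python
import math

def ring_lights(n, radius):
    """Return n point-light positions evenly spaced on a circle in the z = 0 plane."""
    return [(radius * math.cos(2 * math.pi * k / n),
             radius * math.sin(2 * math.pi * k / n),
             0.0) for k in range(n)]

def near_light(point, light, power=1.0):
    """Unit direction from a scene point to a point light, plus the
    inverse-square intensity scale at that point."""
    d = [l - p for l, p in zip(light, point)]
    dist = math.sqrt(sum(c * c for c in d))
    direction = tuple(c / dist for c in d)
    return direction, power / (dist * dist)

def lambertian_intensity(point, normal, albedo, light):
    """Image intensity of a Lambertian point lit by one near point light."""
    direction, scale = near_light(point, light)
    shading = max(0.0, sum(n * c for n, c in zip(normal, direction)))
    return albedo * scale * shading

# Hypothetical setup: 8 lights on a 2 cm ring, surface facing the ring at 30 cm.
lights = ring_lights(8, 0.02)
normal = (0.0, 0.0, -1.0)
on_axis = [lambertian_intensity((0.0, 0.0, 0.3), normal, 1.0, L) for L in lights]
off_axis = [lambertian_intensity((0.1, 0.0, 0.3), normal, 1.0, L) for L in lights]
```

For a point on the ring's axis every light contributes the same intensity, while an off-axis point sees a different direction and intensity from each light, which a distant-light model cannot represent.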

|

A two-parameter family of indirect light transport images, captured live, that includes short-range and long-range indirect light.

|

We develop a novel deep learning framework to simultaneously transform images across spectral bands and estimate disparity. A material-aware loss function is incorporated within the disparity prediction network to handle regions with unreliable matching such as light sources, glass windshields and glossy surfaces. No depth supervision is required by our method. To evaluate our method, we used a vehicle-mounted RGB-NIR stereo system to collect 13.7 hours of video data across a range of areas in and around a city. Experiments show that our method achieves strong performance and reaches real-time speed.

|

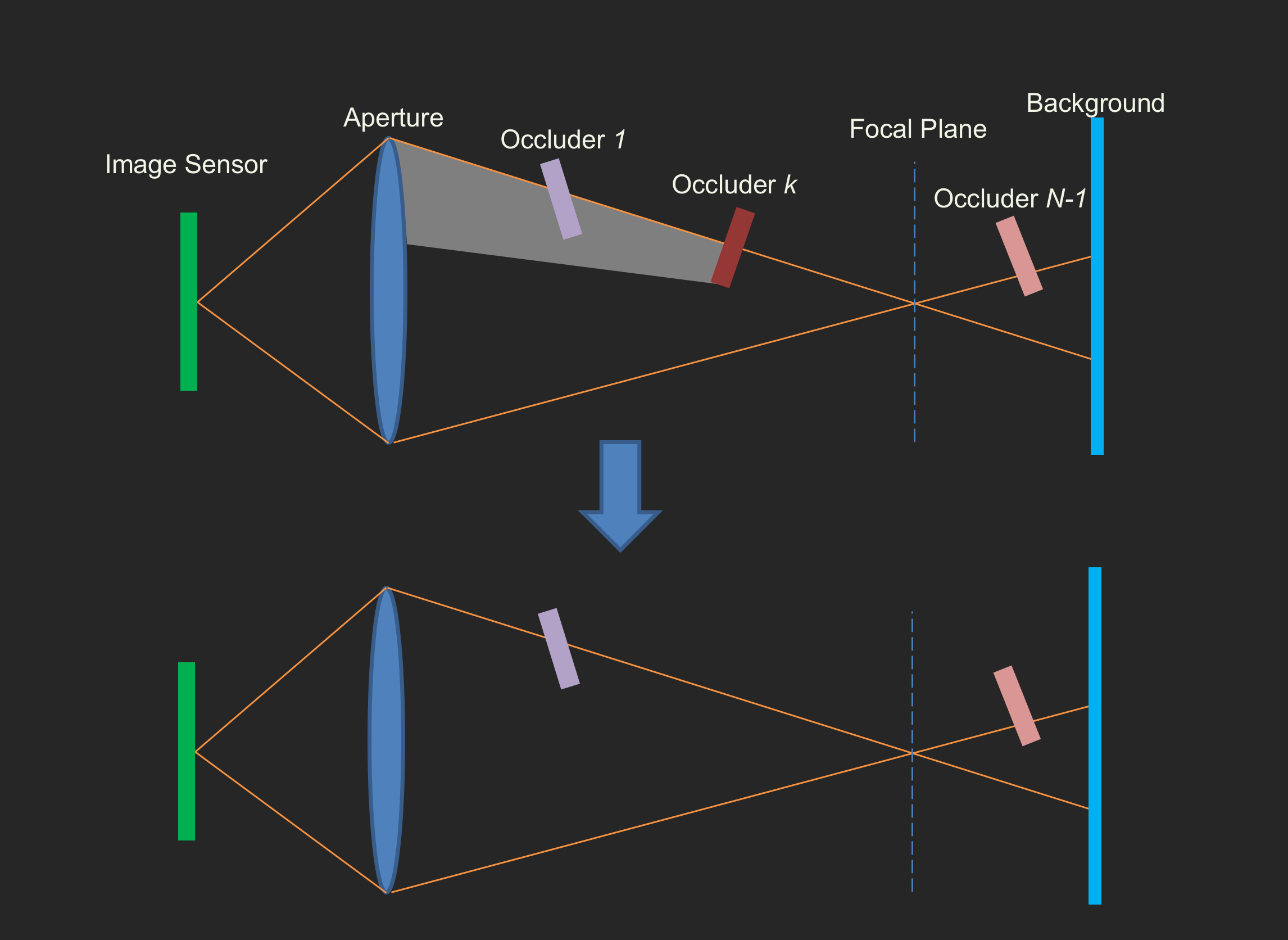

We propose an image formation model that explicitly describes the spatially varying optical blur and mutual occlusions for structures located at different depths. Based on the model, we derive an efficient MCMC inference algorithm that enables direct and analytical computations of the iterative update for the model/images without re-rendering images in the sampling process. Then, the depths of the thin structures are recovered using gradient descent with the differential terms computed using the image formation model. We apply the proposed method to scenes at both macro and micro scales.

|

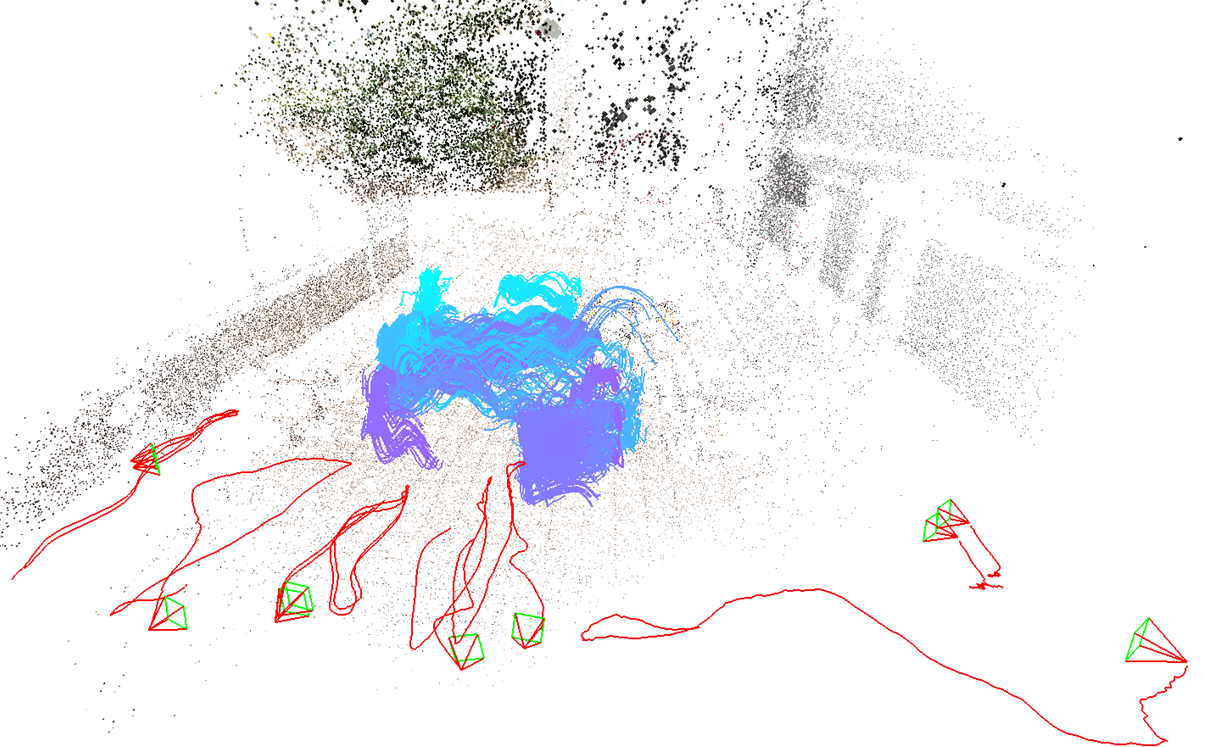

We develop a method to estimate the 3D trajectories of dynamic objects from multiple spatially uncalibrated and temporally unsynchronized cameras. Our estimated trajectories are not only more accurate but also much longer and of much higher temporal resolution than those of prior work.

|

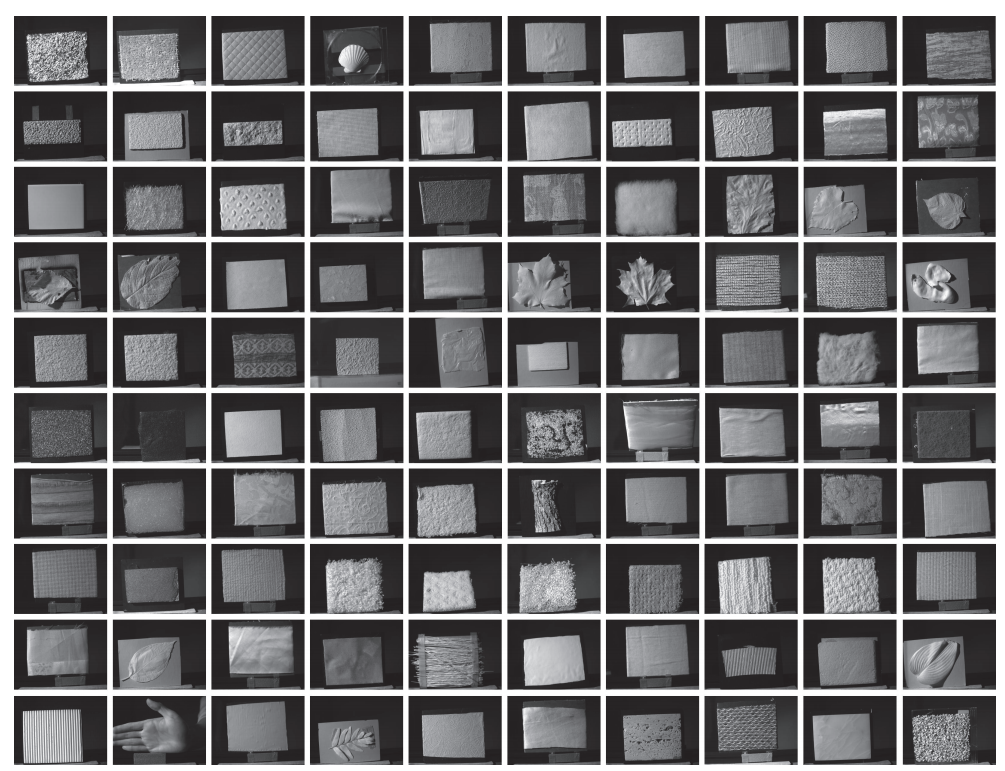

A new dataset of 100 materials captured under 12 different NIR lighting directions and from 9 different viewing angles. A low-parameter BRDF model (in NIR) and the fine-scale geometry of surfaces are estimated simultaneously.

|

The goal is to use a low-latency projector-camera system to intelligently illuminate only the portion of the scene where events are taking place. Unlike traditional lighting, the background is not illuminated, resulting in high-contrast image and video capture of the event.
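
As a rough illustration of selective illumination, the sketch below builds a binary projector mask from two consecutive grayscale camera frames by simple frame differencing. This is an assumed stand-in for event detection, not the project's algorithm; the function name, the threshold, and the assumption that camera and projector pixels are aligned are all hypothetical.

```python
def illumination_mask(prev_frame, curr_frame, threshold=10):
    """Return a binary mask (1 = illuminate) marking pixels whose
    intensity changed by more than `threshold` between two frames.

    Frames are lists of rows of integer gray levels; camera and
    projector pixels are assumed to be aligned one-to-one.
    """
    return [[1 if abs(c - p) > threshold else 0
             for p, c in zip(prev_row, curr_row)]
            for prev_row, curr_row in zip(prev_frame, curr_frame)]

# Only the pixel with a large change is lit; small changes stay dark.
prev = [[0, 0, 0], [0, 0, 0]]
curr = [[0, 50, 0], [0, 0, 5]]
mask = illumination_mask(prev, curr)
```

A real system would also need projector-camera calibration and a latency budget short enough that the mask still matches the scene when it is projected.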

|

In this project, we develop a light-efficient method of performing transport probing operations such as epipolar imaging. Our method enables structured light imaging to be performed effectively under bright ambient light with a low-power projector source.

|

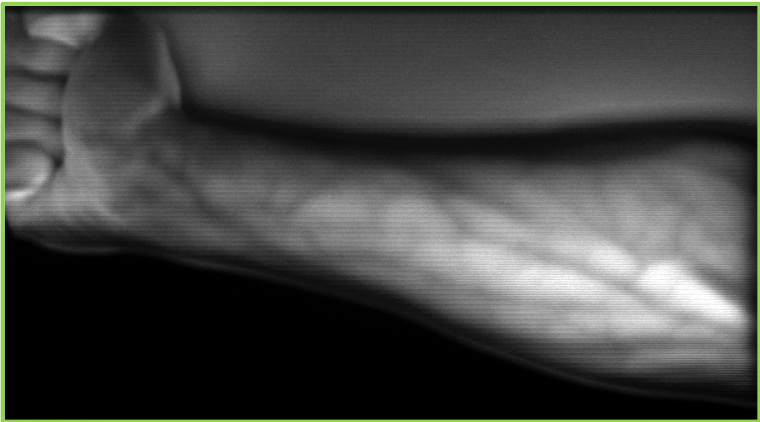

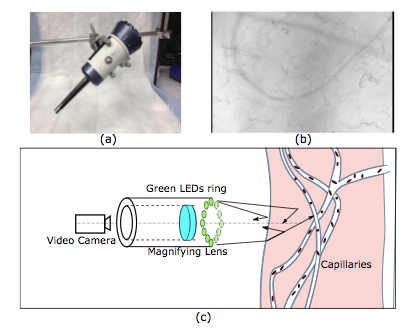

In this project, we develop real-time tools to capture and analyze blood circulation in microvessels, which is important for critical care applications. The tools provide highly detailed blood flow statistics for bedside and surgical care.

|

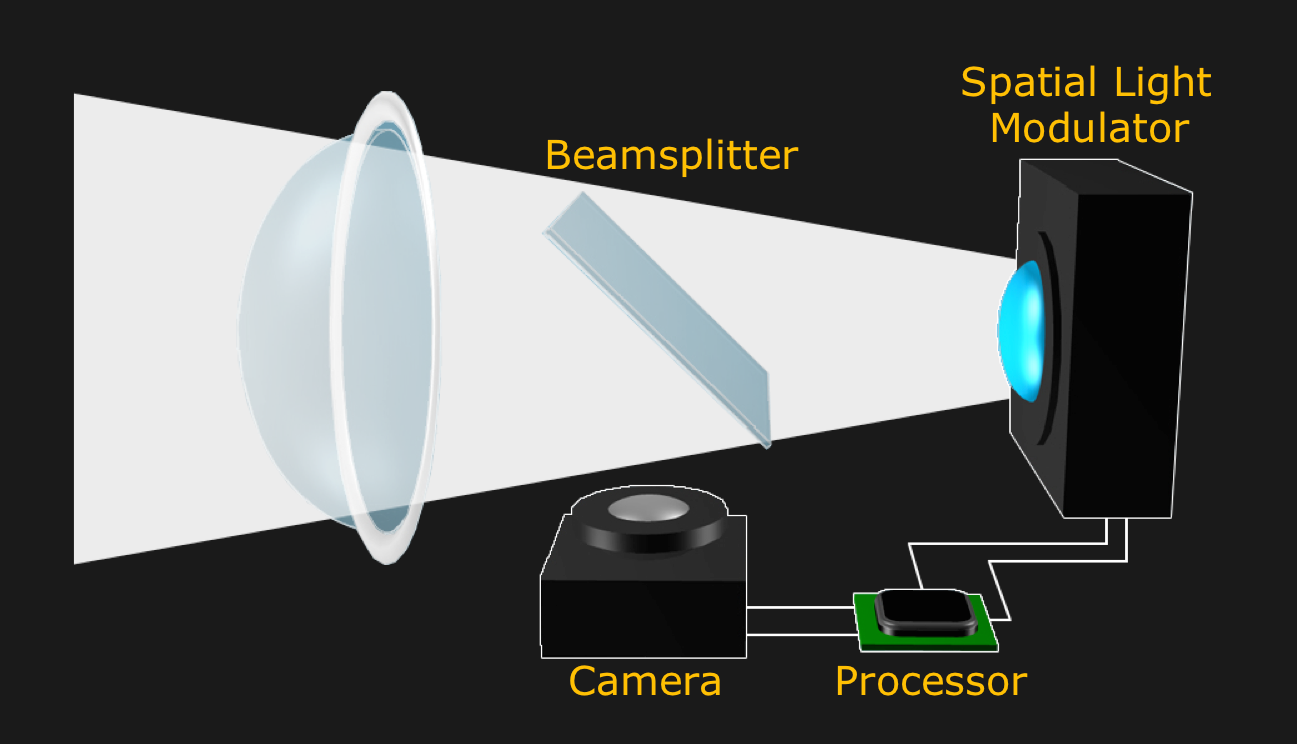

The goal is to build a vehicular headlight that can be programmed to perform many tasks with a single design to improve driver and traffic safety. Example tasks include producing glare-free high beams, reducing the visibility of rain and snow, improving the visibility of the road, and highlighting obstacles.

|

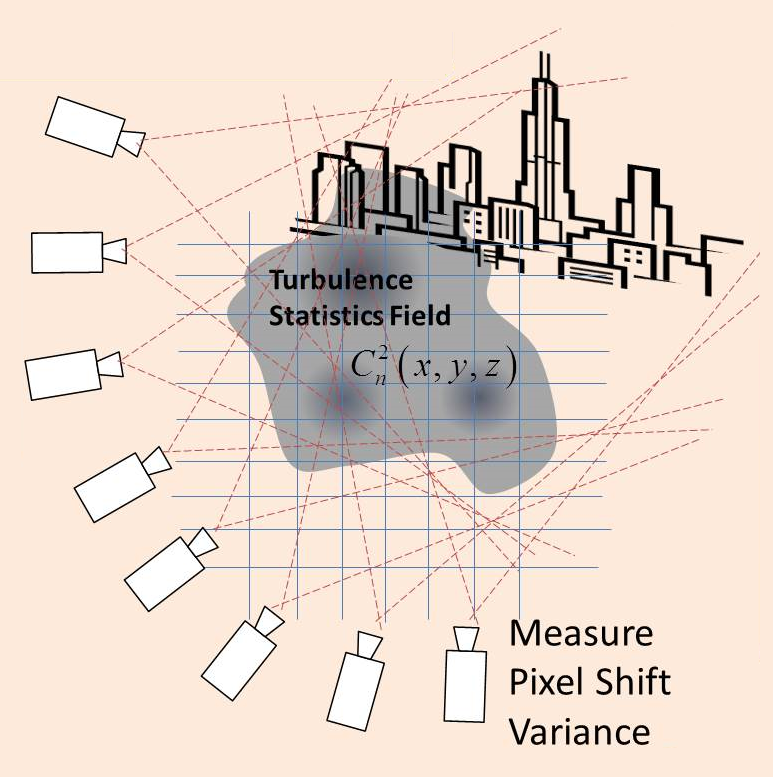

This project explores an inexpensive method to directly estimate the turbulence strength field using only passive multiview observations of a background.

|

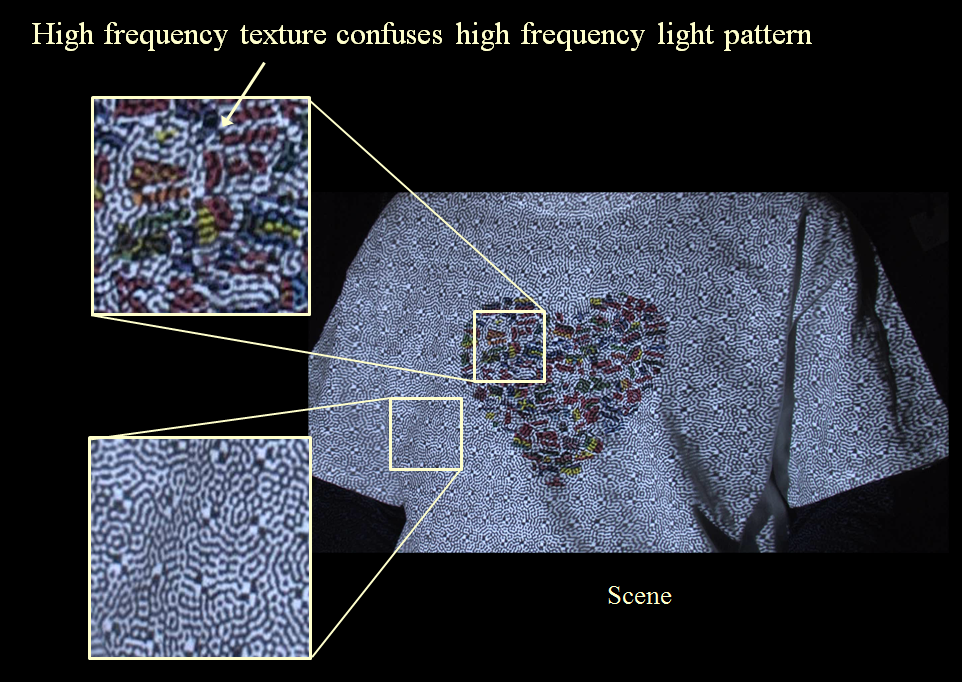

The goal is to allow a structured light system to capture dense shape information of highly textured objects.

|

A novel mathematical framework that extends Galerkin projection to non-polynomial functions, with applications to fast fluid simulation and radiosity rendering of deformable scenes.

|

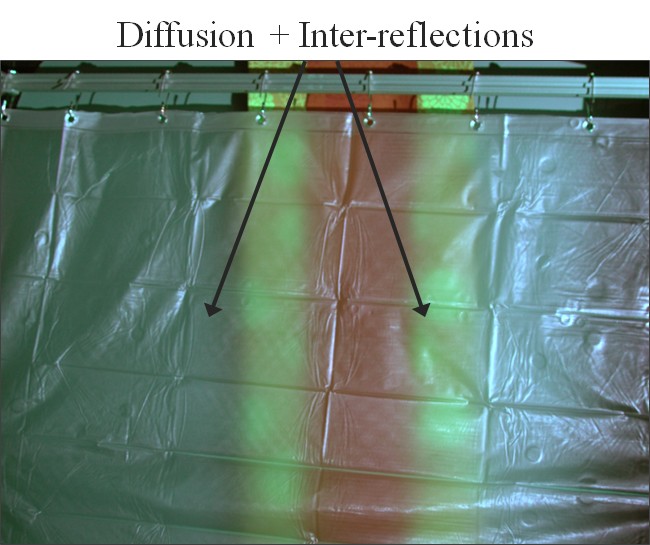

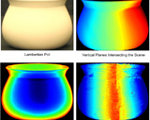

The goal of the project is to efficiently render both the subsurface scattering of light within objects and the diffuse interreflections between them.

|

The goal is to build a smart vehicular headlight that can reduce the visibility of rain and snow, making it less stressful and safer for us to drive at night. This website describes the prototype built in 2011-2012.

|

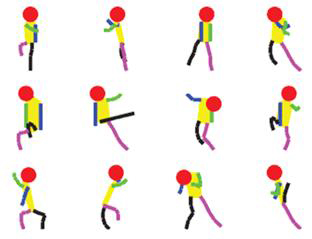

A hierarchical model of part mixtures, inspired by David Marr, is used to sample natural-looking human poses. The model is learned from a human image dataset and can be used to estimate human pose from a single test image.

|

The project aims to model optical turbulence through hot air and to exploit the model to remove turbulence effects from images as well as to recover depth cues in the scene.

|

We have built a structured light sensor for 3D reconstruction that works in sunlight using an off-the-shelf low-power laser projector.

|

The goal is to recover 3D shapes of complex objects using structured lighting that is robust to interreflections, sub-surface scattering and defocus.

|

The image of a deformed document is rectified by tracing the text and recovering the 3D deformation.

|

Imaging and measurement of crop and canopy in vineyards.

|

By using precisely controlled valves and a projector-camera system, we create a vibrant, multi-layered water drop display. The display can show static or dynamically generated images on each layer, such as text, videos, or even interactive games.

|

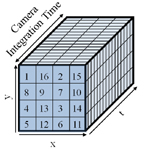

We wish to build video cameras whose spatial and temporal resolutions can be adjusted post-capture depending on the motion in the scene.

|

Multiple coded exposure cameras are used to obtain temporal super-resolution.

|

The deformation field between a distorted image and the corresponding template is estimated. Global optimality criteria for the estimation are derived.

|

We have developed a detector to identify shadow boundaries on the ground in low-quality consumer photographs.

|

Our goal is to recover scene properties in the presence of global illumination. To this end, we study the interplay between global illumination and the depth cue of illumination defocus.

|

We introduce a new, high-quality dataset of calibrated time-lapse sequences. Illumination conditions are estimated in a physically consistent way and HDR environment maps are generated for each image.

|

Given a single outdoor image, we present a method for estimating the likely illumination conditions of the scene.

|

We estimate the shape of the water surface and recover the underwater scene without using any calibration patterns, multiple viewpoints or active illumination.

|

We design a projector-camera system for creating a display with water drops that form planar and curved screens.

|

We generalize dual photography to all types of light sources using opaque, occluding masks.

|

We process photographs taken under DLP lighting to either summarize a dynamic scene or illustrate its motion.

|

We present a framework for fast active vision using Digital Light Processing (DLP) projectors.

|

We analyze two sources of information available within the visible portion of the sky region: the sun position, and the sky appearance.

|

Optimal positioning of light sources and cameras for best visibility in impure waters.

|

We present a practical approach to shape-from-silhouette (SFS) using a novel technique called coplanar shadowgram imaging, which allows us to use dozens or even hundreds of views for visual hull reconstruction.
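
For readers unfamiliar with visual hulls, here is a minimal sketch of generic silhouette carving on a voxel grid. It assumes three axis-aligned orthographic views and is not the coplanar shadowgram setup; the function name and the data layout are illustrative.

```python
def carve_visual_hull(size, silhouettes):
    """Keep voxel (x, y, z) only if every view's silhouette covers its projection.

    `silhouettes` maps an axis name ('x', 'y' or 'z') to the set of 2D
    pixel coordinates inside the object's silhouette in the orthographic
    view along that axis.
    """
    project = {
        'x': lambda x, y, z: (y, z),
        'y': lambda x, y, z: (x, z),
        'z': lambda x, y, z: (x, y),
    }
    hull = set()
    for x in range(size):
        for y in range(size):
            for z in range(size):
                if all(project[axis](x, y, z) in sil
                       for axis, sil in silhouettes.items()):
                    hull.add((x, y, z))
    return hull

# A 2x2 square silhouette seen along each axis of a 4x4x4 grid.
square = {(i, j) for i in (1, 2) for j in (1, 2)}
one_view = carve_visual_hull(4, {'z': square})
three_views = carve_visual_hull(4, {'x': square, 'y': square, 'z': square})
```

With a single view the hull is a 16-voxel column; adding the other two views carves it down to the 8-voxel cube, which is why using many more views gives a tighter reconstruction.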

|

In this paper, we analyze what kinds of depth cues are possible under uncalibrated near point lighting.

|

We present a novel technique to reconstruct the surface of the bone by applying shape-from-shading to a sequence of endoscopic images, with partial boundary in each image.

|

We present a complete calibration of oblique endoscopes.

|

Removing rain and snow from videos using a frequency space analysis.

|

We present a unified framework for reduced-space modeling and rendering of dynamic and non-homogeneous participating media, such as snow, smoke, dust and fog.

|

Scene points can be clustered according to their surface normals, even when the geometry, material and lighting are all unknown.

|

We have developed a simple device and technique for robustly estimating the properties of a broad class of participating media.

|

We are interested in analyzing and rendering the visual effects due to scattering of light by participating media such as fog, mist and haze.

|

Laser range finding and photometric stereo in impure waters.

|

Estimating visibility and weather condition from light source appearances.

|

We derive a new class of photometric invariants that can be used for a variety of vision tasks including lighting invariant material segmentation, change detection and tracking, as well as material invariant shape recognition.

|

Models and algorithms for recovering scene properties from images captured in fog and haze.

|

Calibrated and HDR time-lapse images of an outdoor scene for a year.

|

This page describes a new technology developed at Columbia's Computer Vision Laboratory that can be used to enhance the dynamic range (range of measurable brightness values) of virtually any imaging system.

|