Projects @ Human-Computer Interaction Institute (HCII) at CMU

Intelligibility in Context-Aware Applications

Applications that behave proactively on a user's behalf, particularly those that react to implicit user context, need to be intelligible to end users, explaining what they are doing and why. This project aims to improve the usability of and trust in context-aware applications, by gaining an understanding of how mental models are formed about context-aware systems, and designing interaction techniques and programming tools that will help application designers make their systems intelligible.

Firefly

We investigated people's reaction times to visual stimuli at 7 placements on the body (wrist, upper arm, shoulder, brooch, waist, thigh, foot). We found that people reacted fastest to stimuli at the wrist and slowest to stimuli at the foot. These findings can inform others who want to deploy wearable displays at various body locations.

Pediluma

We investigated the wearing of a small, light-emitting, shoe-mounted prototype display to motivate physical activity. The display shows its light publicly, leveraging real-time, physical social influence to motivate people.

Projects @ Institute for Infocomm Research (I2R)

Spontaneous Interactions

In the Interactive Media Department, I am currently working on a framework to automatically aggregate various services in smart spaces. Users can bring their wireless-enabled mobile devices, and use their web browsers to explore and employ these services.

I have been involved with this project since August 2006, designing and implementing the whole system. In addition to the framework, I have developed a web-based UPnP media access service that the framework can use.

Pointus

During Summer 2004, I did an internship at the Context-Aware Systems Department. To enable office staff to control displays in conference rooms with their own PDAs, I developed a Java-based remote control interface for the mouse and keyboard of the computers there. The work built on Brad Johanson's research on EventHeap and PointRight at Stanford.

Projects @ Cornell University

GroupMeter

To automate more of the feedback given during group collaboration, this project combines peer feedback and automatic linguistic feedback with a chat interface. A collaboration between Cornell University and Parsons, New School for Design, it brought together engineers from Cornell and designers from Parsons.

I was involved with this project from Spring to Summer 2006. I developed the AOL Instant Messenger (AIM) chat bot that captures conversations from group chats for processing in our system. The bot can also send messages from the administrator. I also developed the Flash Remoting bridge between the Flash front-end and the J2EE back-end.

Hidden Treasures

For this project, we developed an enhanced mobile tour guide system for the Cornell University Ithaca campus. The goal was to provide an anytime, anywhere, cell-phone-based tour guide to students and visitors so that they could uncover the past and emerging treasures of Cornell.

I was involved with this project in Fall 2005, working on the Geographic Information System (GIS) to serve maps of the campus to the mobile clients.

Projects @ Nanyang Technological University (NTU)

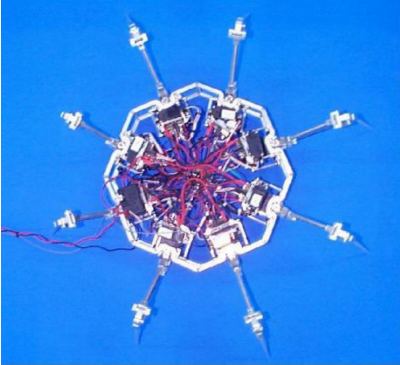

Control of an 8-Legged, 24 DOF, Mechatronic Robot

This project involved programming an 8-legged, 24-DOF mechatronic robot to walk in various gaits, achieving translational and rotational movement under remote control. The robot could move forward and backward, move sideways, and make turns, all at various speeds.