Group

Ph.D. Students

Alnur Ali

Kirstin Early

Matt Wytock

Recent and Ongoing Projects

Energy Disaggregation

Energy disaggregation, or non-intrusive load monitoring, seeks to predict the energy usage of individual electrical appliances from an aggregate measurement (such as a whole-home electricity monitor). Studies have shown that simply providing such appliance-level information to users can, by itself, lead to energy-saving behavior. Our work focuses on advanced inference methods that look jointly at a home's entire energy signal over time to infer device usage; during this project we have also developed a public data set for experimentation, available at http://redd.csail.mit.edu.

[Related publications:

AISTATS 2012,

SUSTKDD 2011,

NIPS 2010]
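
For readers new to the problem, the following minimal Python sketch shows the basic idea with a classic simple baseline, per-timestep combinatorial optimization. It assumes each appliance draws a fixed power when on. For every aggregate reading, it picks the on/off combination whose total is closest to that reading. This is not the method described above. In particular, it treats each timestep independently rather than reasoning jointly over the whole signal. The function name `disaggregate`, the appliance names, and the wattages are hypothetical, made-up examples.

```python
from itertools import product


def disaggregate(aggregate, appliance_power):
    """Per-timestep combinatorial disaggregation (a simple baseline, not joint inference).

    aggregate: whole-home readings in watts, one per timestep.
    appliance_power: dict mapping appliance name -> assumed constant 'on' draw in watts.
    Returns one dict per timestep mapping appliance name -> estimated watts.
    """
    names = list(appliance_power)
    # Enumerate every on/off combination once: 2**n states for n appliances.
    states = list(product((0, 1), repeat=len(names)))
    totals = [sum(s * appliance_power[n] for s, n in zip(state, names)) for state in states]
    estimates = []
    for reading in aggregate:
        # Choose the combination whose summed power is closest to this reading.
        best = min(range(len(states)), key=lambda i: abs(totals[i] - reading))
        estimates.append({n: s * appliance_power[n] for s, n in zip(states[best], names)})
    return estimates


# Hypothetical appliances with made-up wattages.
appliances = {"fridge": 150, "kettle": 2000, "tv": 100}
readings = [150, 2150, 260, 0]
for reading, estimate in zip(readings, disaggregate(readings, appliances)):
    # e.g. 260 W is matched to fridge + tv (250 W), the closest available total
    print(reading, estimate)
```

Because the number of on/off states grows as 2^n with the number of appliances, and because per-timestep matching ignores how devices behave over time, this baseline quickly becomes inadequate. That gap is one motivation for the more advanced, time-aware inference methods described above.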

Wind Turbine Modeling and Learning Control

Wind energy is the fastest-growing source of renewable energy, but major advances in the deployment and control of wind turbines are needed if wind power is to constitute a significant fraction of electricity production. Our project applies reinforcement learning control approaches to maximize energy production on a small-scale turbine we have built in our lab. These methods do not require accurate a priori models of the system (which can be very difficult to obtain in dynamic or unsteady wind conditions); instead, they optimize the controller online, based only on interaction with the environment.

[Related publications:

ACC 2012]
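
To illustrate what model-free, online optimization of a controller can look like, here is a minimal Python sketch of finite-difference policy search on a single control parameter. It is not the method from the ACC 2012 paper. The function names, step sizes, and the simulated power curve `measure_power` are all hypothetical. `measure_power` is a stand-in for the real turbine that peaks at a gain of 2.0. The optimizer never sees that formula and uses only the power values it returns.

```python
def measure_power(gain):
    # HYPOTHETICAL stand-in for the physical turbine: the controller never sees
    # this formula, only the power value it returns (peak at gain = 2.0).
    return 100.0 - 25.0 * (gain - 2.0) ** 2


def finite_difference_search(measure, gain=0.5, delta=0.05, step=0.01, iters=200):
    """Tune one control parameter online using only measured power (no system model)."""
    for _ in range(iters):
        # Perturb the parameter slightly in each direction and observe the result.
        up = measure(gain + delta)
        down = measure(gain - delta)
        # Central finite-difference estimate of d(power)/d(gain).
        grad = (up - down) / (2.0 * delta)
        # Gradient-ascent step toward higher power output.
        gain += step * grad
    return gain


best_gain = finite_difference_search(measure_power)
print(f"tuned gain: {best_gain:.3f}")  # prints "tuned gain: 2.000"
```

On real hardware, power measurements are noisy and wind conditions change continually. A practical version would therefore average several measurements per probe and would have to balance perturbation size against noise. The noise-free simulator here only keeps the sketch short.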

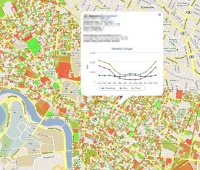

Building Energy Prediction

This project seeks to predict the energy usage of residential and commercial buildings using publicly available data, such as Geographic Information System (GIS) databases and city tax assessor records. The models are useful both for finding "outliers" (buildings that consume much more or less energy than predicted, even after regressing on all the known factors) and as a baseline prediction upon which we can build more detailed models. Our current work looks at predicting monthly electricity and gas bills over several years for 6,500 buildings in the Cambridge area.

[Related publications:

AAAI 2011]
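
A minimal Python sketch of the outlier idea follows. It assumes a single explanatory factor (floor area) fitted with ordinary least squares, whereas the models described above regress on many factors drawn from GIS and tax-assessor data. A building is flagged when the magnitude of its residual exceeds k times the standard deviation of all residuals. The function names, the toy data, and the threshold k = 2 are hypothetical choices made for illustration.

```python
from statistics import mean, pstdev


def fit_line(x, y):
    """Ordinary least squares fit of y ~ a + b * x; returns (a, b)."""
    mx, my = mean(x), mean(y)
    b = sum((xi - mx) * (yi - my) for xi, yi in zip(x, y)) / sum((xi - mx) ** 2 for xi in x)
    return my - b * mx, b


def flag_outliers(features, usage, k=2.0):
    """Return indices of buildings whose usage is far from the regression prediction."""
    a, b = fit_line(features, usage)
    # Residual = actual usage minus predicted usage.
    residuals = [u - (a + b * f) for f, u in zip(features, usage)]
    cutoff = k * pstdev(residuals)
    return [i for i, r in enumerate(residuals) if abs(r) > cutoff]


# Made-up toy data: floor area (thousands of sq ft) and monthly electricity use (kWh).
area = [1, 2, 3, 4, 5, 6, 7, 8, 9, 10]
usage = [100, 200, 300, 400, 500, 600, 1400, 800, 900, 1000]
print(flag_outliers(area, usage))  # prints [6]
```

Note that an outlier also pulls the least-squares fit toward itself and inflates the residual spread. A robust regression method would be less sensitive to this, and plain least squares is used here only for brevity.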

Reinforcement Learning Algorithms and Theory

In conjunction with the application-oriented projects above, we have developed a number of reinforcement learning algorithms, some applied to those projects and some analyzed from a more theoretical perspective.

[Related publications:

NIPS 2011,

ICML 2009a,

ICML 2009b,

ICML 2008]

Past Projects

I led the Stanford Learning Locomotion project, an effort to apply machine learning algorithms to the task of controlling a quadruped robot over extreme terrain. Using these techniques, we succeeded in crossing a wide variety of rough, extremely challenging, and previously unseen terrain. For more information, see the project page.

[Related publications:

IJRR 2011,

RSS 2009,

ICRA 2009a,

ICRA 2009b,

ICRA 2008,

NIPS 2007,

RSS 2007]

Autonomous Driving

I worked with the Stanford Driving Team on applying machine learning algorithms to high-speed driving control. The goal of this project was to learn controllers that could handle much higher speeds and more aggressive driving maneuvers than were possible with the existing control system, which had been developed for the relatively low-speed driving of the DARPA Grand Challenge and Urban Challenge contests.

[Related publications:

IV 2011,

ICRA 2010]