|

I am a Ph.D. student in the Machine Learning Department at Carnegie Mellon University, where I am fortunate to be advised by Professor Andrej Risteski. Previously, I spent four wonderful years at the University of Illinois at Urbana-Champaign, completing my bachelor's degree in Statistics & Computer Science, as well as Mathematics. I am grateful for the mentorship of Professor Jiawei Han, Professor AJ Hildebrand, and Professor Pramod Viswanath. In the industry, I spent some exciting time at Google (summer 2023), ByteDance (TikTok) (summer 2022), Quora (2019-2020), and Facebook (summer 2018). Email / CV (resume) / LinkedIn / Google Scholar / Twitter / GitHub / Quora |

|

|

I study machine learning, natural language processing, and data mining. I currently work on (1) improving mathematical understanding of transformer-based language models (training dynamics, efficient sampling, mechanistic interpretability), and (2) developing principled approaches to self-supervised learning. |

|

In the following list, the asterisk symbol (*) in the author list means equal contribution or alphabetical order. |

|

Kaiyue Wen, Yuchen Li, Bingbin Liu, Andrej Risteski Conference on Neural Information Processing Systems (NeurIPS), 2023 bibtex / slides / Twitter summary / video recording of 15-minute talk (starts at 5:39:20) We show that interpretability claims based on isolated attention patterns or weight components can be (provably) misleading. Our results are based on next-token prediction on Dyck (i.e. balanced brackets). We show that Transformers (exactly or approximately) solving this task satisfy a structural characterization, “balance condition“, derived from ideas in formal languages (the pumping lemma). |

|

Yuchen Li, Yuanzhi Li, Andrej Risteski International Conference on Machine Learning (ICML), 2023 bibtex / slides / Twitter summary / project page / codes We analyze the optimization process of transformers trained on data involving "semantic structure", e.g. topic modeling. Through theoretical analysis and experiments, we show that between same-topic words, the embeddings should be more similar, and the average pairwise attention should be larger. In particular, we observe that with carefully chosen initialization and learning rate, the optimization process of self-attention can be approximately broken down into two stages: in stage 1, only the value matrix changes significantly; in stage 2, the key and query matrices catch up much later, even though all components are jointly optimized through standard SGD or Adam. This observation might be of independent interest, for future works on understanding the learning dynamics of transformers as well. |

|

Ashwini Pokle*, Jinjin Tian*, Yuchen Li*, Andrej Risteski Conference on Artificial Intelligence and Statistics (AISTATS), 2022 bibtex / Twitter summary We show through theoretical results and controlled experiments that even on simple data models, non-contrastive losses have a preponderance of non-collapsed bad minima (which do not exist for their contrastive loss counterpart). Moreover, we show that the training process does not avoid these minima. |

|

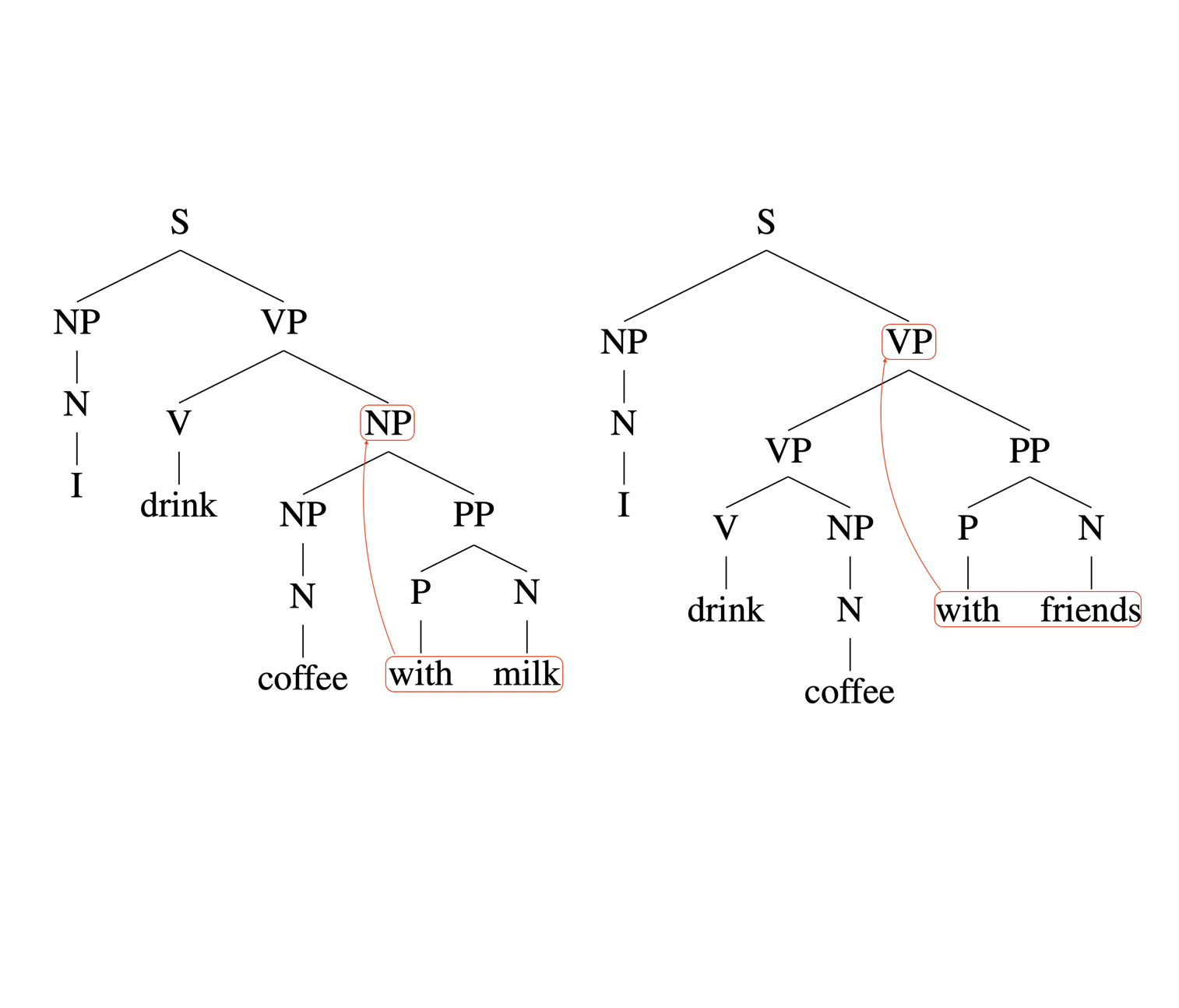

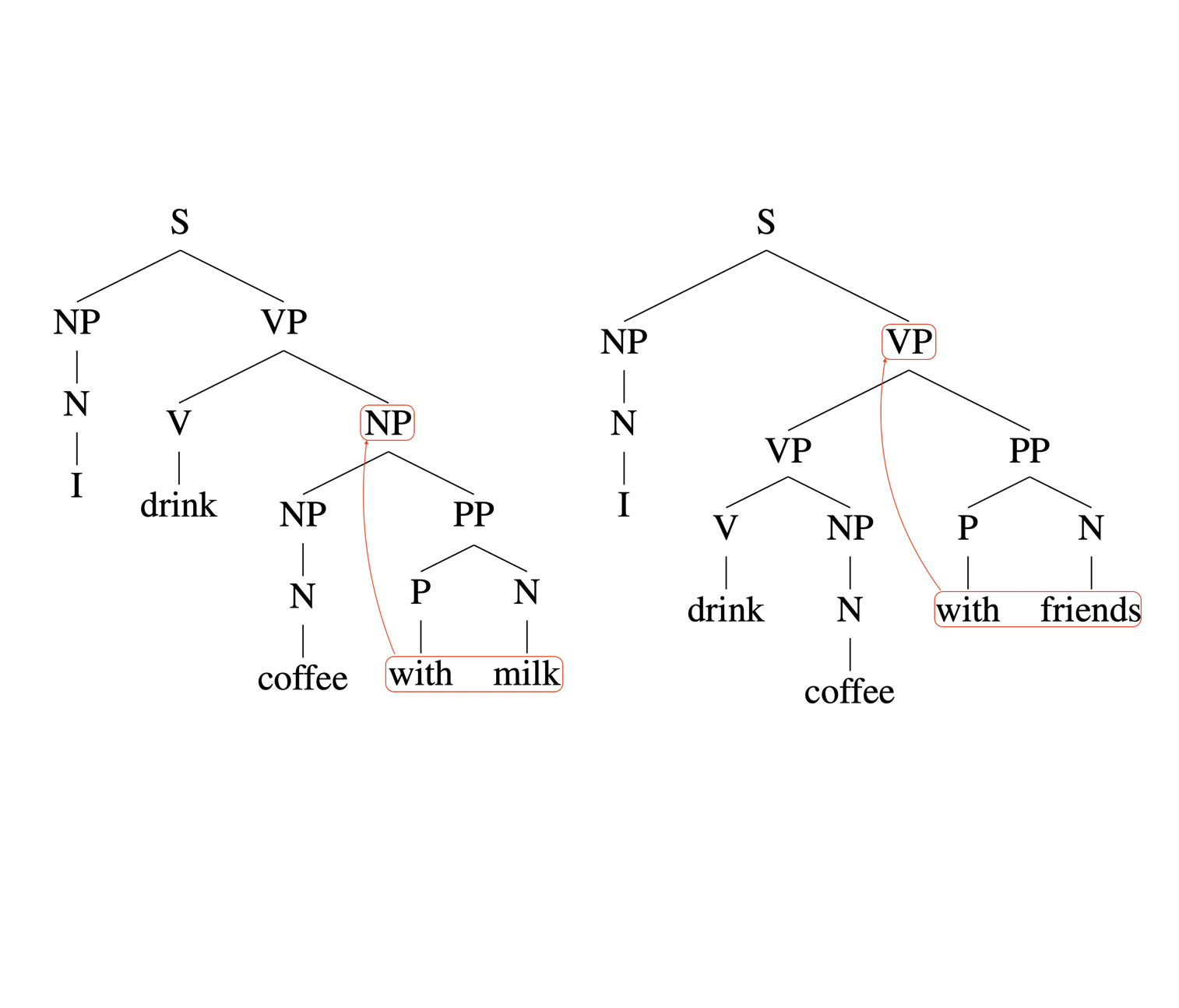

Yuchen Li, Andrej Risteski Association for Computational Linguistics (ACL), 2021 bibtex / slides / Twitter summary / Andrej's Tweet / blog post We prove that, if the context for the parsing decision at each word is unbounded & bidirectional, then the parsing model has full representational power. On the other hand, even if the context is bounded in one direction (which is the case for various leading approaches to constituency parsing), the parsing power is quite limited for hard grammars. |

|

Xinwei He*, AJ Hildebrand*, Yuchen Li*, Yunyi Zhang* Journal of Discrete Mathematics & Theoretical Computer Science (DMTCS), vol. 22 no. 1, Automata, Logic and Semantics, 2020 bibtex / poster Consider the sequence of leading digits of 2^n: 1, 2, 4, 8, 1, 3, 6, ...... What is the complexity of such sequences? They are neither periodic nor random. How to quantify and distinguish the middle ground? We prove that, the block complexity is linear for the sequence of leading digits of a^n in base b (except for some special cases). In fact, we give explicit formula for the linear coefficients in terms of a and b. |

|

Yu Shi*, Jiaming Shen*, Yuchen Li, Naijing Zhang, Xinwei He, Zhengzhi Lou, Qi Zhu, Matthew Walker, Myunghwan Kim, Jiawei Han Conference on Information and Knowledge Management (CIKM), 2019 bibtex This work discovers hypernym-hyponym ("is-a" relation) pairs by an innovative combination of information from natural language and graph structure. Our system outperforms existing methods that utilize signals from text, graph, or both. |

|

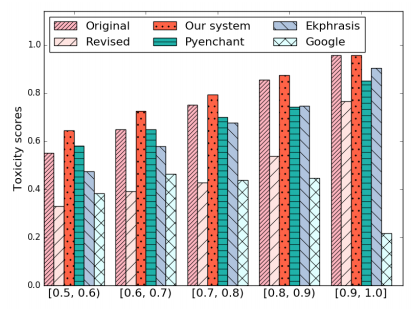

Hongyu Gong, Yuchen Li, Suma Bhat, Pramod Viswanath The Web Conference (WWW), 2019 bibtex Given a misspelled word, our algorithm generates candidates based on the surface form, represents the context as a linear space, and selects the candidate whose embedding is closest to the context subspace. The spelling correction accuracy is better than the state-of-the-art methods, improving the performance in downstream applications. |

|

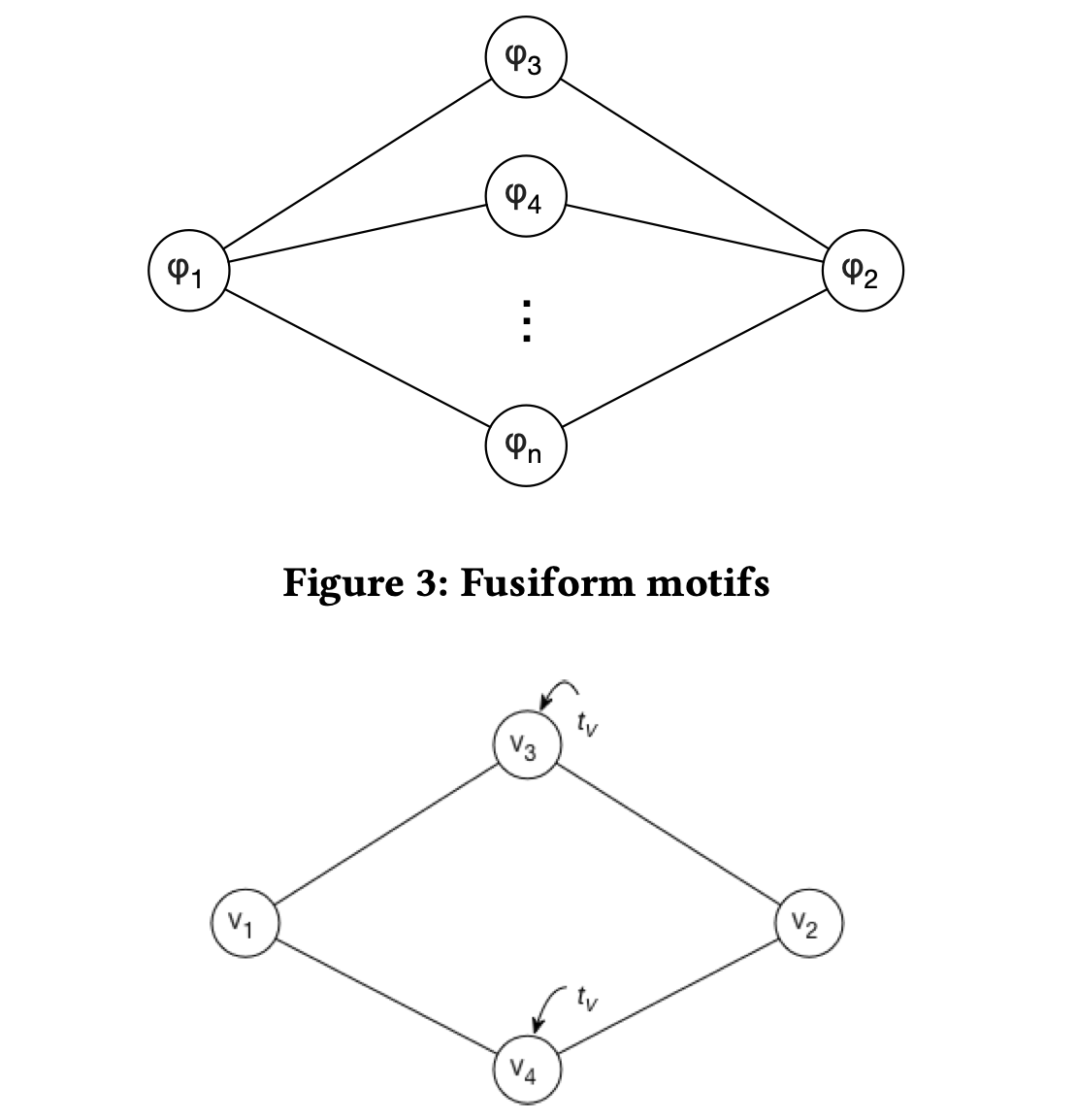

Yuchen Li*, Zhengzhi Lou*, Yu Shi, Jiawei Han Workshop on Mining and Learning with Graphs (MLG), in conjunction with KDD, 2018 bibtex This work shows the effectiveness of temporal motifs (frequent substructures) in quantifying the relationship between nodes in heterogeneous information networks, and proposes efficient algorithms for counting a family of useful motifs. |

|

|

|

slides / video recording of 15-minute talk (starts at 5:39:20) 2023-11 DeepMath Conference on the Mathematical Theory of Deep Neural Networks |

|

slides 2023-05 NeuLab, Language Technologies Institute (LTI), CMU 2023-04 University of Illinois at Urbana-Champaign (UIUC)’s Machine Learning Reading Group |

|

slides 2021-08 Association for Computational Linguistics (ACL) Conference 2021-07 NEC Laboratories Europe 2021-06 Approximately Correct Machine Intelligence (ACMI) Lab, CMU |

|

|

|

Role: Teaching Assistant Course Webpage: This URL Instructor: Nihar Shah |

|

Role: Teaching Assistant Course Webpage: This URL Instructors: Andrej Risteski and Hoda Heidari |

|

Template of this page: credit to: link. |