Designing Deep Networks for Surface Normal Estimation

People

Abstract

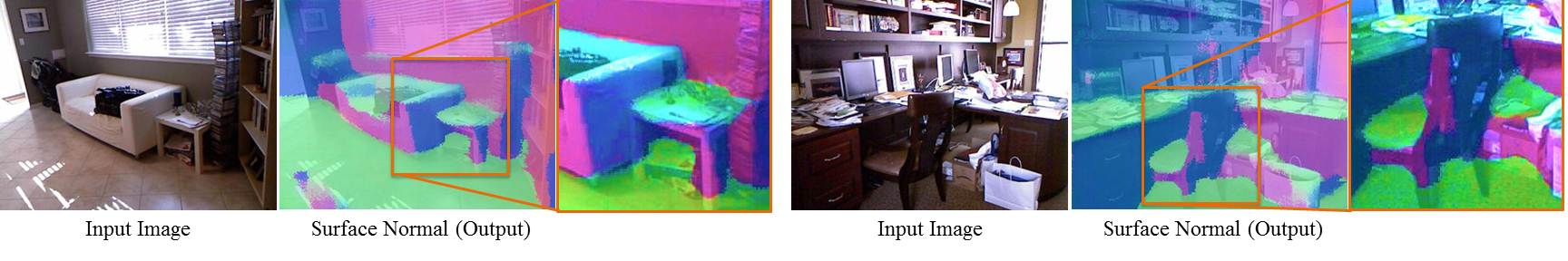

In the past few years, convolutional neural nets (CNN) have shown incredible promise for learning visual representations. In this paper, we use CNNs for the task of predicting surface normals from a single image. But what is the right architecture we should use? We propose to build upon the decades of hard work in 3D scene understanding, to design new CNN architecture for the task of surface normal estimation. We show by incorporating several constraints (man-made, manhattan world) and meaningful intermediate representations (room layout, edge labels) in the architecture leads to state of the art performance on surface normal estimation. We also show that our network is quite robust and show state of the art results on other datasets as well without any fine-tuning.

Paper

|

[pdf] [results for NYU Depth V2] [code and models] |