Myung Hwangbo, Ph.D.

Postdoctoral Fellow

Email: myung@cs.cmu.edu

Curriculum Vitae

News

A paper entitled "Visible-Spectrum Gaze Tracking for Sports" will be presented at a CVPR workshop, the 1st IEEE International Workshop on Computer Vision in Sports (CVsports).

I am going to join the Intel computer vision group (Hillsboro, Oregon) as a research scientist this summer.

Education

- Ph.D. in Robotics, Robotics Institute, School of Computer Science, Carnegie Mellon University

- Advisor: Takeo Kanade

- Thesis: Vision-based Navigation of a Small Fixed-Wing Airplane in Urban Environment (dissertation)

- M.S., System Design Division, Mechanical Engineering, Pohang University of Science and Technology (POSTECH), Korea

- Advisor: Young-Il Yeom

- Thesis: Kinematic Control and Design of a Wheeled Robot

- B.S., Mechanical Engineering, Pohang University of Science and Technology (POSTECH), Korea

Journal Publications

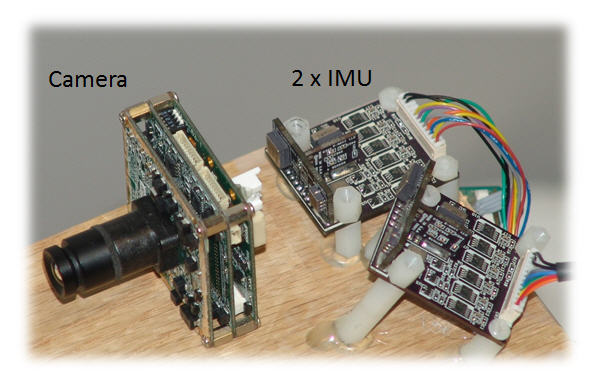

Myung Hwangbo, Jun-Sik Kim, and Takeo Kanade, IMU Self Calibration Using Factorization, IEEE Transactions on Robotics, vol. 29, no. 2, pp. 493-507, Apr 2013. [doi]

Myung Hwangbo, Jun-Sik Kim, and Takeo Kanade, Gyro-Aided Feature Tracking for a Moving Camera: Fusion, Auto-Calibration and GPU Implementation, International Journal of Robotics Research, vol. 30, no. 14, pp. 1755-1774, Dec 2011. [doi]

Jun-Sik Kim, Myung Hwangbo, and Takeo Kanade, Spherical Approximation for Multiple Cameras in Motion Estimation: its Applicability and Advantages, Computer Vision and Image Understanding, vol. 114, no. 10, pp. 1068-1083, Oct 2010. [doi]

Selected Conference Publications

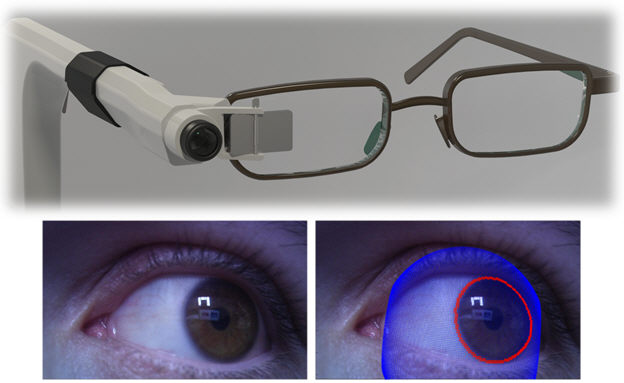

B. R. Pires, Myung Hwangbo, M. Devyver, and T. Kanade, Visible-Spectrum Gaze Tracking for Sports, 1st IEEE Int'l Workshop on Computer Vision in Sports, CVPR'13 Workshop, Jun 2013 [doi]

M. Hwangbo and T. Kanade, Maneuver-Based Autonomous Navigation of a Small Fixed-Wing UAV, IEEE Int'l Conf. on Robotics and Automation (ICRA), May 2013

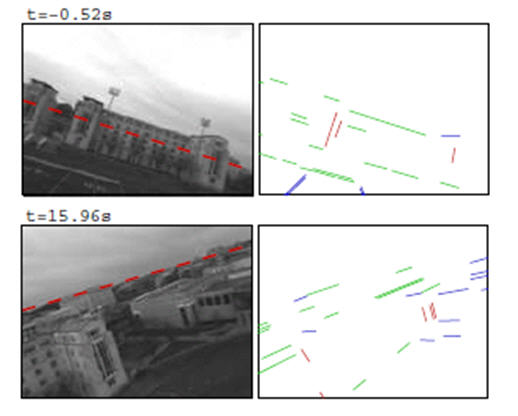

M. Hwangbo and T. Kanade, Visual-inertial UAV Attitude Estimation Using Urban Scene Regularities, IEEE Int'l Conf. on Robotics and Automation (ICRA), May 2011 [doi]

M. Hwangbo, J.-S. Kim, and T. Kanade, Inertial-aided KLT Feature Tracking for a Moving Camera, IEEE/RSJ Int'l Conf. on Intelligent Robots and Systems (IROS), Oct 2009 [doi]

J.-S. Kim, M. Hwangbo, and T. Kanade, Parallel Algorithms to a Parallel Hardware: Designing Vision Algorithms for a GPU, 5th IEEE Workshop on Embedded Computer Vision, ICCV'09 Workshop, Sept 2009 [doi]

J.-S. Kim, M. Hwangbo, and T. Kanade, Realtime Affine-photometric KLT Feature Tracker on GPU in CUDA Framework, 5th IEEE Workshop on Embedded Computer Vision, ICCV'09 Workshop, Sept 2009 [doi]

M. Hwangbo and T. Kanade, Factorization-based Calibration Method for MEMS Inertial Measurement Unit, IEEE Int'l Conf. on Robotics and Automation (ICRA), May 2008 [doi]

J.-S. Kim, M. Hwangbo, and T. Kanade, Motion Estimation Using Multiple Non-overlapping Cameras for Small Unmanned Aerial Vehicles, IEEE Int'l Conf. on Robotics and Automation (ICRA), May 2008 [doi]

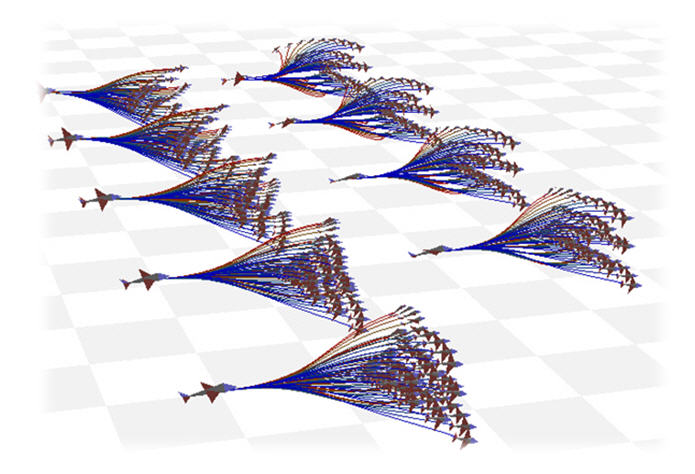

M. Hwangbo, J. Kuffner, and T. Kanade, Efficient Two-phase 3D Motion Planning for Small Fixed-wing UAVs, IEEE Int'l Conf. on Robotics and Automation (ICRA), Apr 2007 [doi]

S. Roth, B. Hamner, S. Singh, and M. Hwangbo, Results in Combined Route Traversal and Collision Avoidance, Int'l Conf. on Field and Service Robotics (FSR), Apr 2005

D. H. Shin, B. Hamner, S. Singh, and M. Hwangbo, Motion Planning for a Mobile Manipulator with Imprecise Locomotion, IEEE/RSJ Int'l Conf. on Intelligent Robots and Systems (IROS), Oct 2003

N. X. Dao, B.-J. You, S.-R. Oh, and M. Hwangbo, Visual Self-Localization for Indoor Mobile Robots using Natural Lines, IEEE/RSJ Int'l Conf. on Intelligent Robots and Systems (IROS), Oct 2003

B.-J. You, M. Hwangbo, S.-O. Lee, S.-R. Oh, Y. D. Kwon, and S. Lim, Development of a Home Service Robot ISSAC, IEEE/RSJ Int'l Conf. on Intelligent Robots and Systems (IROS), 2003

Projects

First Person Vision & Gaze Detection

First Person Vision (FPV) is a transformative system that can monitor, record, and assist people in their daily lives at work or at play in a truly symbiotic manner. Our FPV device captures a person's full field of vision and specific gaze-based intent to provide the user with intelligent cues and guidance, personal assistance, training, information, or entertainment. [CVSPORTS'13]

3D Air Slalom: Vision-based Autonomous UAV Navigation

A small 1.2-meter fixed-wing airplane, a beginner-level model airplane, is transformed into an autonomous airplane. The plane is equipped with a camera, an IMU, and GPS, and runs a vision-based autonomous navigation algorithm on board. The camera detects multiple colored targets on the ground, and the airplane passes each target in sequence in its assigned direction. Since this task requires the UAV to complete a challenging slalom course with assigned directions, we call it "3D Air Slalom". [Dissertation, ICRA'13]

Motion Planning of a Small Fixed-Wing UAV

A small fixed-wing airplane inherently suffers from poor agility and from motion uncertainty in windy conditions. We developed a new motion planner that always returns a feasible motion sequence that the airplane can execute. Rather than finding the shortest path, the planner maximizes the chance of reaching the target, which proved to be a more promising approach for an error-prone, small, lightweight drone. [ICRA'13, ICRA'07]

Visual-Inertial UAV Attitude Estimation

A new sensor-fusion method that combines camera and inertial sensor data (accelerometers and gyroscopes) is developed for realtime estimation of the airplane attitude (roll and pitch). In images of urban scenes, vertical and horizontal edges appear on many man-made structures, and these line edges can be used as visual cues for the vehicle attitude. [ICRA'11]
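As a rough illustration of why vertical edges carry attitude information: the vanishing point of the vertical lines back-projects to the gravity direction in the camera frame, and roll and pitch follow from that direction. The sketch below shows only this geometric relation, not the filter used in [ICRA'11]. The function name, the pinhole intrinsic matrix `K_cam`, the camera axis convention (x right, y down, z forward), and the sign conventions for roll and pitch are all assumptions made for the example.

```python
import numpy as np

def attitude_from_vertical_vp(vp_h, K_cam):
    """Roll and pitch (radians) from the vertical vanishing point.

    vp_h  : homogeneous pixel coordinates (3,) of the vertical vanishing point
    K_cam : 3x3 pinhole intrinsic matrix (assumed known)
    Assumed camera frame: x right, y down, z forward (optical axis).
    """
    # Back-project the vanishing point to a 3D direction in the camera frame.
    d = np.linalg.solve(K_cam, np.asarray(vp_h, dtype=float))
    d /= np.linalg.norm(d)
    # A vanishing point fixes the direction only up to sign; pick the one
    # pointing "down" in the image (assumes the camera is not upside down).
    if d[1] < 0:
        d = -d
    # Assumed conventions: roll is the in-image tilt of the down direction,
    # pitch is the elevation of the optical axis above the horizon.
    roll = np.arctan2(d[0], d[1])
    pitch = np.arctan2(-d[2], np.hypot(d[0], d[1]))
    return roll, pitch
```

Passing the vanishing point in homogeneous form keeps the function usable when the camera is level and the vertical vanishing point lies at infinity.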

IMU Self-Calibration

A new IMU calibration method is developed for automatic in-field calibration with no artificial apparatus. A natural force (gravity) or landmarks (distant scene objects) are sufficient as calibration input. The existence of linear solutions for any type of IMU is shown and illustrated using the factorization method, which is well known in computer vision. [TRO'13]
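To give a flavor of how gravity alone can constrain a calibration linearly, here is a minimal sketch for a much simpler model than the one in [TRO'13]: an accelerometer with only per-axis scale and bias, held still in several orientations. It uses a standard axis-aligned ellipsoid fit, not the factorization method. The function name, the measurement model `s * (m - b)`, and the value of `g` are assumptions for illustration, and the fit assumes the bias is small compared with gravity.

```python
import numpy as np

def calibrate_accel_from_gravity(meas, g=9.81):
    """Estimate per-axis scale s and bias b so that ||s * (m - b)|| = g.

    meas : (N, 3) raw accelerometer readings taken at rest in N >= 6
           sufficiently different orientations (hypothetical input).
    """
    m = np.asarray(meas, dtype=float)
    # Expanding sum_i s_i^2 (m_i - b_i)^2 = g^2 and dividing by
    # k = g^2 - sum_i s_i^2 b_i^2 gives an equation linear in (a, c):
    #   sum_i a_i m_i^2 - 2 c_i m_i = 1,  a_i = s_i^2 / k,  c_i = s_i^2 b_i / k
    A = np.hstack([m**2, -2.0 * m])
    x, *_ = np.linalg.lstsq(A, np.ones(len(m)), rcond=None)
    a, c = x[:3], x[3:]
    b = c / a
    # Recover k from g^2 = k * (1 + sum_i a_i b_i^2), then the scales.
    k = g**2 / (1.0 + np.sum(a * b**2))
    s = np.sqrt(k * a)
    return s, b
```

With noise-free readings the six unknowns are recovered exactly; with real data the least-squares solution serves as an initial estimate.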

Gyro-assisted KLT Feature Tracking

[Open-source code is available.] When a camera rotates rapidly or shakes severely, a conventional KLT (Kanade-Lucas-Tomasi) feature tracker becomes vulnerable to large appearance changes between images. Tracking fails in the KLT optimization step mainly because the initial condition, taken to be the final image warp from the previous frame, is inadequate. Gyroscopes can greatly help the KLT tracker, keeping the result robust under fast camera ego-rotation. The GPU implementation tracks 1000 features at video rate. [IJRR'11, ICCVW'09b]
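One common way a gyroscope can supply a better initial condition is to predict where each feature moves under pure camera rotation, using the homography H = K R K^-1. The sketch below illustrates that idea only; it is not the released code or necessarily the formulation in [IJRR'11]. The function names are hypothetical, and the example assumes the gyro axes are aligned with the camera axes, gyro biases are already removed, the angular rate is constant over `dt`, and the pinhole intrinsics `K_cam` are known.

```python
import numpy as np

def rotation_from_gyro(omega, dt):
    """Rodrigues' formula: rotation for body rate omega (rad/s) held for dt seconds."""
    theta = np.asarray(omega, dtype=float) * dt
    angle = np.linalg.norm(theta)
    if angle < 1e-12:
        return np.eye(3)
    k = theta / angle
    S = np.array([[0.0, -k[2], k[1]],
                  [k[2], 0.0, -k[0]],
                  [-k[1], k[0], 0.0]])  # skew-symmetric matrix of the axis
    return np.eye(3) + np.sin(angle) * S + (1.0 - np.cos(angle)) * (S @ S)

def predict_features(points, K_cam, omega, dt):
    """Predict pixel positions in the current frame from the previous frame.

    points : (N, 2) pixel coordinates in the previous frame
    Assumes pure rotation, so depth is not needed.
    """
    # Body-frame rates give R_prev<-cur; scene directions map with its transpose.
    R_cur_prev = rotation_from_gyro(omega, dt).T
    H = K_cam @ R_cur_prev @ np.linalg.inv(K_cam)
    pts_h = np.hstack([np.asarray(points, dtype=float), np.ones((len(points), 1))])
    warped = (H @ pts_h.T).T
    return warped[:, :2] / warped[:, 2:3]
```

The predicted positions would then seed the tracker's search in place of the previous frame's positions, so the optimization starts close to the true feature location even under fast rotation.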

Videos

Visual-Inertial UAV Attitude Estimation [ICRA'11]

This video shows a driftless attitude estimation result for a small UAV operating in an urban area. A RANSAC-based line classifier partitions the line segments into vertical, horizontal, or outlier groups by detecting multiple vanishing points (VPs). Gyroscopes predict the instantaneous rotation change. Each line associated with either the vertical or horizontal VP is used separately to update the posterior attitude estimate in an extended Kalman filter.

3D Air Slalom: Vision-based Autonomous UAV Navigation [ICRA'13]

Two targets are arbitrarily placed on the ground (each target consists of four squares, and the blue square indicates the goal direction). First, the UAV flies around to search for all the targets and identifies the entering direction from their color arrangement. The motion planner then guides the UAV to reach each target from its correct direction.

Gyro-aided KLT Feature Tracking [IJRR'11]

A low-cost IMU (~$100) is attached to the camera. We intentionally shake the camera with left-right, up-down, and random rotations. See how much better our gyro-aided KLT works than a conventional KLT. The graph at the bottom shows the number of successfully tracked features for both methods (red is the gyro-aided method and black is the image-only method) as well as the corresponding gyroscope data. The red curve stays nearly constant regardless of camera motion.

Visual Odometry [CVIU'10]

The camera and IMU are placed on top of a car. We drive the car around a parking lot. Our sequential SFM (Structure-from-Motion) reconstructs the car trajectory and 3D scene structure in realtime. My gyro-aided KLT is also used to provide robust feature tracks as input to the SFM.