|

Generalized N-body Problems

| |

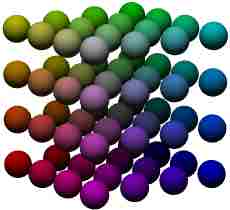

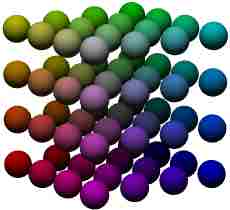

In my thesis, I unified for the first time a large class

of problems which I call generalized N-body problems. These

problems have the essential structure of the Coulombic N-body

problem of computational physics, consisting of decomposable operators

(like summation or maximization) on evaluations of certain kernel

functions on n-tuples of points in a D-dimensional metric space.

All of the problems listed in this column, and many other important problems

not listed (I could list at least 50), are examples of such problems.

The first (and very brief) paper discussing much of the big picture

was (Gray and Moore,

'N-Body' Problems in Statistical Learning [pdf]

[ps],

presented orally at NIPS 2000), but is now outdated.

The full story is much longer and is

still unfolding at a fast pace, so it will eventually appear probably in

monograph (focused book) form in the near future. Individual results

will also appear in journal form very soon.

|

|

|

|

What makes the problem class interesting is that is possible to devise

a unified solution approach for this class, as shown in my thesis. It

is based on the introduced principle of higher-order

divide-and-conquer, or divide-and-conquer over multiple sets (a

principle which is actually more general than what is needed here) --

in this case over multiple space-partitioning data structures. I

utilize the best data structures for

proximity search, which are data-adaptive, unlike the typical

structures of computational physics. My way of using such structures

yields strict error bounds in non-exact cases. I used this general

strategy to develop new algorithms, which I call dual-tree or

more generally multi-tree methods. Each method, listed in this

column, demonstrates how the same basic algorithmic methodology

specializes to each problem. In addition to passing an empirical test

versus the best existing methods, most of the algorithms here have

been heavily used in significant applications.

|

|

|

|

|

|

Kernel Density Estimation

| |

∀ xq ∑r

Kh(|| xq - xr ||)

Kernel density estimation is the canonical statistical method for the

fundamental problem of estimating general probability densities

(i.e. without distribution assumptions), but its intractability

has limited its use since its introduction in the 1950's. Our method

for this problem can be extended to solve local polynomial

regression problems.

|

|

|

|

Our dual-tree algorithm, utilizing a simple finite-difference scheme,

turns out to be faster than all previous proposals for the same

accuracy, including the FFT and the fast gauss transform, dramatically

so beyond 2 or 3 dimensions. It works for all common kernel

functions, can perform multiple bandwidths simultaneously for faster

cross-validation, provides hard error bounds, and requires no

parameter tweaking. (Gray and Moore, Nonparametric Density

Estimation: Toward Computational Tractability, SIAM Data Mining

2003 [winner of Best Algorithms Paper Prize]. Gray and Moore,

Rapid Evaluation of Multiple Density Models [pdf],

[ps] AI & Statistics 2003. Final journal version

coming very soon.)

|

|

|

|

n-point Correlation Functions

| |

∑q ∑r ∑s

I∇(xq, xr, xs)

The n-point correlation functions form the foundation of

spatial statistics, and constitute the principal means by which

cosmologists have validated the best existing cosmological models with

respect to observations since the 1960's. Their naive computational

cost is O(Nn). The n-point

correlation functions are also used heavily in statistical physics and

other areas of physics. The fractal dimension is isomorphic

to the 2-point correlation.

|

|

|

|

Our n-tree algorithm is the first algorithm to dramatically reduce the

cost, by up to seven orders of magnitude in practice, while returning

the exact answer. This has made higher-order correlations on large

modern datasets, which are necessary for cosmological studies, possible for the first

time. (First described in the NIPS 2000 paper. Moore et al., Fast Algorithms

and Efficient Statistics: n-point Correlation Functions [pdf] [ps], Mining

the Sky 2000. Fast Computation of the Pair Correlation and

n-point Correlation Functions, Conf. Computational Physics

2004.) We have also developed a Monte

Carlo algorithm which is faster for large radii. Final journal version

coming very soon.

|

|

|

∀ xq argminr

|| xq - xr ||

The all-nearest-neighbors problem asks, for each of a set of query

points, what is its nearest neighbor among a set of reference points.

In the more general bichromatic version, the query set and reference

set may be different. Aside from being a classical challenge problem

of computational geometry, this is often the real problem of interest

in practice, not the single-query problem.

|

|

|

|

Our simple dual-recursive depth-first-search algorithm turns out to be

faster than the best previous algorithms in practice, including

Vaidya's algorithm and the well-separated pair decomposition. It is

exact, solves the more general bichromatic problem, works for general

k, and as with all of our algorithms on this page, it works

with virtually any space-partitioning tree. (First described in the

NIPS 2000 paper. Full experimental results and detailed description

will appear in the paper associated with the Proximity Project hopefully sometime in

2005.)

|

|

|

|

Nonparametric Bayes Classification

| |

∀ xq max{

P(C1)ph(xq|C1),

P(C2)ph(xq|C2)}

|

Nonparametric density estimation used within the Bayes decision

framework provides simple nonlinear Bayes-optimal classification without

parametric assumptions, and, less known, performance comparable to the

state of the art in practice. Our method for this problem can also be

used for support vector machine prediction -- however, in that

context we only achieved speedups over the naive method of about 2-3. I

therefore consider this methodology to be a failure for that problem.

|

|

|

|

Our algorithm for this problem utilizes three trees for a 2-class

problem, and two Fibonnaci-heap priority queues. It is exact

-- this is the reward for not simply performing one approximate

kernel summation for each class.

It allows the nonparametric Bayes classifier to

scale to massive training and test sets, unlike methods like SVM's,

and has been used with high accuracy in a large-scale quasar detection problem and a drug discovery problem. (Though these

applications of the algorithm have been published, the algorithm

itself has not, and will be submitted in 2005.)

|

|

|

|

Gaussian Process Regression

| |

Φ-1y, Φu

|

Gaussian process regression is a Bayesian regression method, related

to the method of kriging, whose principal required computation is the

inversion of an N×N matrix. Gaussian process

regression is an example of a more general situation within

statistics/machine learning of a kernel matrix-vector multiplication.

|

|

|

|

In this case it occurs inside Skilling's matrix inversion method. An

extension of our algorithm for kernel density estimation can be used

for this. A preliminary demonstration of this for a 1-million-point

dataset is in (Gray, Fast Kernel Matrix-Vector Multiplication with

Application to Gaussian Process Regression [pdf], [ps] CMU tech report 2004). I consider this work

incomplete though, because the validation was only done on one dataset

and I would like to see the method used by a GP expert in a real application.

|

|