As in Section 3, working with a modified representation allows certain inference techniques that are not applicable in the Boolean case.

As an example, suppose that we are resolving

There are two new difficulties that arise when we implement these

ideas. The first is a consequence of the fact that resolution can be

ambiguously defined for two quantified clauses. Consider resolving

In practice, however, this need not be a problem:

Proof. If there is more than one pair of resolving literals in ![]() and

and ![]() the result of the resolution will be vacuous, so we can

assume that there is a single literal

the result of the resolution will be vacuous, so we can

assume that there is a single literal ![]() in

in ![]() with

with ![]() in

in

![]() . If

. If ![]() is the

is the ![]() th literal in

th literal in ![]() and

and ![]() the

the ![]() th

literal in

th

literal in ![]() , it follows that we can resolve the original

, it follows that we can resolve the original ![]() and

and ![]() by unifying the

by unifying the ![]() th literal in

th literal in ![]() and the

and the ![]() th literal

in

th literal

in ![]() . It is clear that this resolution will be a generalization

of

. It is clear that this resolution will be a generalization

of ![]() .

.

![]()

What this suggests is that the reasons being associated with literal values be not the lifted nogoods that are retained as clauses, but ground instances thereof that were initially used to prune the search space and can subsequently be used to break ambiguities in learning.

The second difficulty is far more substantial. Suppose that

we have the axiom

Now suppose that we are trying to prove ![]() for an

for an

![]() and a

and a ![]() that are ``far apart'' given the skeleton

of the relation

that are ``far apart'' given the skeleton

of the relation ![]() that we already know. It is possible that we

use resolution to derive

that we already know. It is possible that we

use resolution to derive

The problem is that if ![]() is the size of our domain, (51) will

have

is the size of our domain, (51) will

have ![]() ground instances and is in danger of overwhelming our unit propagation algorithm even in the presence of reasonably sophisticated subsearch

techniques. Some technique needs to be adopted to ensure that this

difficulty is sidestepped in practice. One way to do this is to learn

not the fully general (51), but a partially bound instance that

has fewer ground instances.

ground instances and is in danger of overwhelming our unit propagation algorithm even in the presence of reasonably sophisticated subsearch

techniques. Some technique needs to be adopted to ensure that this

difficulty is sidestepped in practice. One way to do this is to learn

not the fully general (51), but a partially bound instance that

has fewer ground instances.

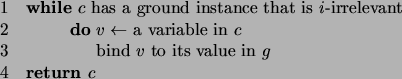

We may still learn a nogood with an exponential number of ground

instances, but at least have some reason to believe that each of these

instances will be useful in pruning subsequent search. Note that

there is a subsearch component to Procedure 5.5, since we

need to find ground instances of ![]() that are irrelevant. This cost

is incurred only once when the clause is learned, however, and not at

every unit propagation or other use.

that are irrelevant. This cost

is incurred only once when the clause is learned, however, and not at

every unit propagation or other use.

It might seem more natural to learn the general (51), but to modify the subsearch algorithm used in unit propagation so that only a subset of the candidate clauses is considered. As above, the most natural approach would likely be to restrict the subsearch to clauses of a particular irrelevance or better. Unfortunately, this won't help, since irrelevant clauses cannot be unit. Restricting the subsearch to relevant clauses is no more useful in practice than requiring that any search algorithm expand only successful nodes.

Before moving on, let us note that a similar phenomenon occurs in the

pseudo-Boolean case. Suppose we have a partial assignment

![]() and constraints

and constraints

The conflict set here is easily seen to be

![]() ,

and this is indeed prohibited by the derived constraint (54). But

(54) eliminates some additional bad assignments as well, such as

,

and this is indeed prohibited by the derived constraint (54). But

(54) eliminates some additional bad assignments as well, such as

![]() . Just as in the lifted case, we have learned

something about a portion of the search space that has yet to be

examined.

. Just as in the lifted case, we have learned

something about a portion of the search space that has yet to be

examined.