Detecting Boundaries for Segmentation and Recognition

People

Martial Hebert

Description

Shape is a powerful visual cue for recognizing objects in images, segmenting images into regions corresponding to individual objects, and, more generally, understanding the 3D structures of scenes. However, to be able to exploit shape information, we need reliable ways of detecting fragments of object boundaries, a difficult problem in itself. In This project investigates possible solutions to these problems. Specifically, it explores ways to reliably detect occluding boundaries by focusing on the question of combining motion cues with appearance cues for better detection of occlusion boundaries. In addition, the project explores different ways in which boundaries can be used in key vision tasks by investigating the integration of boundary information in segmentation and category recognition.

The project generates advances in two areas: 1)

detecting boundary information from images

as shape cues and 2) using boundary

information in segmentation and recognition

tasks. In the first area, it extends the

current approaches for contour detection to include motion cues. This is

motivated by the availability of temporal information in many practical

applications. Assuming that we can extract boundary fragments, the issue of

using them effectively in segmentation and recognition remains an open question.

The project will make substantial contributions toward answering that question,

including integrating boundary information with image segmentation and

recognition algorithms.

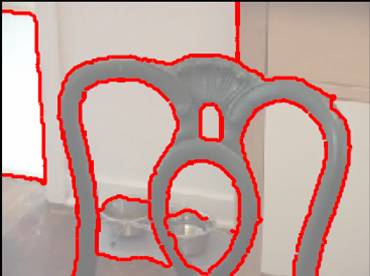

The first step in the estimation of occlusing boundaries is the computation of local cues that can be used for discriminating between occluding and non-occluding boundaries. Two cues are combined. The first one is computed from local appearance only using Martin's Pb detector. The second one is computed from local motion information, i.e., features computed from the difference between motion statistics on either side of each tentative edge fragment. This estimation is "local" in that it does not linking the individual contour elements and it does enforce any global consistency constraints.

|

|

| Movie | Movie |

One use of the estimated boundaries is in combination with segmentation algorithms. For example, the figure below shows the comparison between using raw contour data and estimated boundary in a standard segmentation algorithm. More generally, the boundaries can be used for segmenting foreground object from background.

After foreground/background segmentation, it is possible in principle to use the pixels near the edge to construct appearance models. The appearance models could be as simple as color histograms, for example. Once such a model is obtained, we can leverage the numerous recent advances in interactive image and video segmentation and matting. The hand-labeled foreground and background pixels provided by the user in these approaches specify the foreground and background models to drive the segmentation. But if we provide those constraints in an automatic fashion by using our detected occlusion boundaries and their associated notion of foreground and background, the result would be a fully automatic segmentation of objects in the scene. Starting with the input scene in the upper left and moving to the right, we could first extract edges, followed by classifying those edges into surface markings (black) and occlusion boundaries (white). In addition, it is possible, in principle, to detect which side of the occlusion boundaries are foreground, as indicated by the blue arrows.

Object recognition is another area where the use of boundary information is crucial, but not fully exploited. Many object recognition approaches rely on appearance features computed by aggregating image information within local patches. One issue with these approaches is that the patches may cross object boundaries, resulting in many unusable large-scale features which contain information from objects and background. A more problematic issue is that, since the local features are essentially convenient means of representing the local texture, they are far less discriminative for objects that are characterized primarily by their shape. This has been addressed recently by using recognition techniques that use contour fragments instead of regional descriptors. This addresses part of the problem, but a remaining issue is that many of the contour fragments may be irrelevant if they correspond to spurious intra-category variations on the appearance of the object, rather than capturing useful shape information. Using boundaries should, in principle, force the model to focus on those fragments that capture shape. We are working to combine a category recognition approach with the boundary detection techniques. Our proposed recognition approach supports semi-supervised category learning and it can operate directly from contour fragments. Importantly, the recognition approach can also incorporate other regional features based on appearance. Therefore, as before, we do not advocate that boundaries or contours alone are sufficient for recognition. Our more limited goal is to show how they can be used effectively to exploit shape information in a category recognition setting.

In addition, we are exploring the use of boundaries as a bridge between segmentation and recognition for generating candidate object locations in an input image. Many recognition approaches operate from a database of known categories and features on which they have been trained. The system then functions in a top-down manner, trying to find model features and deciding (via some spatial reasoning, for example) whether a particular object exists at a particular location. On the other hand, a system that uses bottom-up cues from boundaries to reason about the existence of an object (that is, any generic object) within the scene could first propose locations of potential objects, as a cueing mechanism, thereby directing the recognition scheme to the most fruitful locations within the scene and removing surrounding background clutter from consideration. In addition, the ability of extracting potential objects from a scene automatically may have implications for unsupervised learning and discovery of novel objects, since each new object would not necessarily need to be manually extracted from its environment. This could potentially also allow for simultaneous in situ learning of objects and their context.

Data Set

The data set used in the first two references below is now available, including video clips, ground truth labels, and reference frames. Feel free to use this data set. Please acknowledge the first reference below if you use the data set in published work.

References

A. Stein, D. Hoiem, and M. Hebert, Learning to Find Object Boundaries Using Motion Cues. IEEE International Conference on Computer Vision (ICCV), October, 2007.

A. Stein and M. Hebert, Combining Local Appearance and Motion Cues for Occlusion Boundary Detection. British Machine Vision Conference (BMVC), September, 2007.

A. Stein and M. Hebert, Local Detection of Occlusion Boundaries in Video.

British Machine Vision Conference, September, 2006.

A. Stein and M. Hebert, Using Spatio-Temporal Patches for Simultaneous Estimation of Edge Strength, Orientation, and Motion. Proc. Beyond Patches Workshop, IEEE Conference on Computer Vision and Pattern Recognition, June, 2006.

Funding

This research is supported by:

- NSF Grant IIS-0713406

- KIST